How I use FlowDeck to let my AI agent build and run my apps

A while back, I posted on X about how folks manage the context windows for their AI agents. In response, the person who built FlowDeck reached out and asked if I'd give it a try. My workflow was mostly fine as-is (build with Cursor, tell it to check builds with xcodebuild, and go to Xcode to actually build and run), but a simple CLI that would give my agent better output (less context pollution) and a nice way to interact with my apps from the command line sounded interesting enough to try.

I installed FlowDeck, integrated it into Maxine's Xcode project, and within a day or two it became the thing my agent reaches for by default. I haven't really thought about it since, which is the best thing I can say about a developer tool. I'm not the kind of person who enjoys endlessly tweaking their setup, so whenever I integrate something into my workflow, it has to fit in quickly and work reliably.

This post is about how FlowDeck meets that requirement, and why I think it makes a lot of sense if you're using AI agents to work on your iOS projects.

You'll learn why using raw xcodebuild and Xcode's built-in MCP can be painful in the first place, what FlowDeck changes, how I set it up (including the rule I added to my agents.md), and how it compares to alternatives like XcodeBuildMCP.

If you haven't read my post on setting up a delivery pipeline for agentic iOS projects, I recommend that you give that a read too. It'll help you gain a more complete understanding of my development setup and my way of working.

Understanding how xcodebuild can fall short

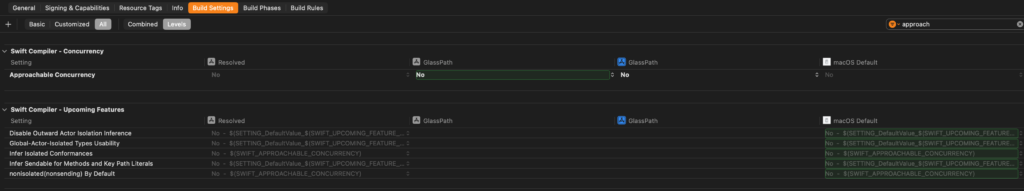

Without FlowDeck, my agents would run xcodebuild for builds and tests, xcrun simctl for anything simulator-related, and xcrun devicectl when a real device was involved. Once Xcode shipped its MCP bridge and I set it up, my agents would also try to use it through xcrun mcpbridge whenever they thought it was relevant. Essentially, I let the agent figure out what to do, how to do it, and when to do it. This worked mostly fine for finding compilation errors and running tests, but it never really worked well when I wanted to run (and debug) my app.

At its core, each of those tools works. However, using them can be complex, and unless it's instructed properly, it's really hard for an agent to figure out (efficiently) what the right thing to do is. This can quickly fill up your agent's context, which, in the end, results in an agent that burns its IQ points faster than it burns tokens.

Even if the agent knew exactly which tools to call and how to call them, and got it right every single time, xcodebuild output is huge and unstructured. A failed build dumps hundreds of lines of compiler noise, and the agent has to figure out what's relevant. xcrun simctl has a different command shape for every task, and figuring out which UDID to target is its own little side quest. These things work, and if an agent is working on a long-running task anyway, you might not care about efficiency. But the context window fills up fast, and it fills up with uninteresting noise.
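The sketch below makes the noise problem concrete. It shows the kind of filtering an agent ends up improvising when all it has is raw output. It assumes diagnostics follow the common `path:line:column: error: message` shape that Swift and Clang print. Linker errors and other tool output look different and slip straight through, which is part of why this approach is brittle.

```python
import re

# ASSUMPTION: diagnostics look like "/path/File.swift:12:5: error: message".
# Linker errors and other tool output use different formats and are not matched.
DIAGNOSTIC = re.compile(
    r"^(?P<file>[^:\n]+):(?P<line>\d+):(?P<column>\d+): "
    r"(?P<severity>error|warning): (?P<message>.*)$"
)


def extract_diagnostics(log: str) -> list[dict]:
    """Return unique error/warning diagnostics from raw xcodebuild output, in order."""
    seen = set()
    results = []
    for raw_line in log.splitlines():
        match = DIAGNOSTIC.match(raw_line.strip())
        if not match:
            continue  # skip build commands, progress lines, code excerpts, etc.
        key = match.group(0)
        if key in seen:
            continue  # the same diagnostic can be reported more than once
        seen.add(key)
        info = match.groupdict()
        info["line"] = int(info["line"])
        info["column"] = int(info["column"])
        results.append(info)
    return results


if __name__ == "__main__":
    import sys

    for d in extract_diagnostics(sys.stdin.read()):
        print(f"{d['file']}:{d['line']}:{d['column']}: {d['severity']}: {d['message']}")
```

You'd pipe a build into it with something like `xcodebuild -project Maxine.xcodeproj -scheme Maxine build 2>&1 | python3 extract_diagnostics.py`, where the script name is hypothetical. The point is how much guesswork this takes compared to output that is structured to begin with.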

When I tried the Xcode MCP bridge, I initially hoped it would solve the context window problem (and in a way, it seems to), but using it can be frustrating. It requires Xcode to be open, and every time a new agent process connects, macOS throws up an "Allow external agents to use Xcode tools" alert. I'm fairly sure this was supposed to be a thing of the past in Xcode 26.4, but I'm still approving connections every day when I use the MCP. (I have some projects set up to not use FlowDeck because others who work on them don't use FlowDeck yet.)

If you've ever used Codex with Xcode's MCP, you might know what I mean. There's a long thread on the Codex repo where people describe being re-prompted over and over as the app spawns new MCP subprocesses. Apple's own documentation on external agents explains why this happens, but explaining it doesn't make the dialogs any less annoying.

None of this is broken, exactly. It's just a lot of friction for a workflow that's supposed to be hands-off.

Exploring what FlowDeck does differently

FlowDeck is a single CLI that wraps xcodebuild, simctl, devicectl, and other tools behind one consistent, easy-to-use interface. It still calls Apple's tools under the hood; it just cleans up the parts that my agent (or I) have to interact with.

The first thing that matters is output. When the agent runs flowdeck build --json, it gets structured events instead of a wall of text. A build failure shows up as a single error object with the file, line, column, and message. An agent can parse that and go fix it, instead of grepping through a log that might get truncated halfway through. This keeps the context window much cleaner.
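With structured output, finding the errors becomes a lookup instead of a search. This sketch assumes that flowdeck build --json emits one JSON object per line. It also assumes that error events carry `type`, `file`, `line`, `column`, and `message` fields. Those field names are my guesses for illustration, not FlowDeck's documented schema, so check the real output before relying on them.

```python
import json
from dataclasses import dataclass


@dataclass
class BuildError:
    file: str
    line: int
    column: int
    message: str


def build_errors(output: str) -> list[BuildError]:
    """Collect error events from JSON-lines build output.

    ASSUMPTION: one JSON object per line, and error events look like
    {"type": "error", "file": ..., "line": ..., "column": ..., "message": ...}.
    These field names are hypothetical, not FlowDeck's documented schema.
    """
    errors = []
    for raw_line in output.splitlines():
        raw_line = raw_line.strip()
        if not raw_line:
            continue
        try:
            event = json.loads(raw_line)
        except json.JSONDecodeError:
            continue  # ignore anything that isn't a JSON event
        if isinstance(event, dict) and event.get("type") == "error":
            errors.append(
                BuildError(
                    file=event.get("file", ""),
                    line=int(event.get("line", 0)),
                    column=int(event.get("column", 0)),
                    message=event.get("message", ""),
                )
            )
    return errors
```

To feed it real output, you could capture `subprocess.run(["flowdeck", "build", "--json"], capture_output=True, text=True).stdout` and pass that string in. Whether the events arrive on stdout is another assumption to verify.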

The second thing is configuration. You save your project settings once:

```bash
flowdeck config set -w Maxine.xcodeproj -s Maxine -S "iPhone 16"
```

Or, if you're not exactly sure what you should pass to flowdeck config, just use flowdeck -i and it'll walk you through a couple of questions that build your config. After that, it presents you with hotkeys for building, running, testing, and more.

Once the config is saved, the agent just runs flowdeck build, flowdeck run, or flowdeck test. No flags, no destination strings, no guessing which scheme to use; all of that is read from your config.
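The sketch below shows what the saved config spares the agent from. It expands the three values from the flowdeck config set example (project, scheme, and simulator name) into the raw xcodebuild invocation an agent would otherwise have to assemble. It is not how FlowDeck works internally. It only illustrates the flags and destination string the config stands in for. The `ProjectConfig` type is hypothetical, and reading -S as a simulator name is my assumption.

```python
import shlex
from dataclasses import dataclass


@dataclass
class ProjectConfig:
    """HYPOTHETICAL stand-in for the values saved by `flowdeck config set`."""
    container: str   # e.g. "Maxine.xcodeproj" or "Maxine.xcworkspace"
    scheme: str
    simulator: str   # simulator device name, e.g. "iPhone 16"


def xcodebuild_command(config: ProjectConfig, action: str = "build") -> list[str]:
    """Build the raw xcodebuild argv that a saved config keeps you from typing."""
    if config.container.endswith(".xcworkspace"):
        container_flag = "-workspace"
    elif config.container.endswith(".xcodeproj"):
        container_flag = "-project"
    else:
        raise ValueError(f"unsupported container: {config.container}")
    return [
        "xcodebuild",
        container_flag, config.container,
        "-scheme", config.scheme,
        "-destination", f"platform=iOS Simulator,name={config.simulator}",
        action,
    ]


if __name__ == "__main__":
    config = ProjectConfig("Maxine.xcodeproj", "Maxine", "iPhone 16")
    print(shlex.join(xcodebuild_command(config)))
```

Running it prints `xcodebuild -project Maxine.xcodeproj -scheme Maxine -destination 'platform=iOS Simulator,name=iPhone 16' build`. An agent has to get every one of those details right on every call unless they live in a config.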

To run your app and explore its logs, use flowdeck run --log. This command launches the app and streams its output. If you didn't start the app with --log, you can still attach to it while it's running with flowdeck logs <app-id>. Either way, it captures both print() and OSLog output without any xcrun simctl spawn commands.

My favorite part is that I don't have to remember these commands when I interact with FlowDeck manually. When I run my app, FlowDeck lists the commands I can use while the app is running, for example to inspect logs.

The feature I did not expect to care about but absolutely do is flowdeck ui simulator session start. It stores a screenshot and an accessibility tree on disk every 500 milliseconds. When my agent is testing a UI change, it can read those files and see what's on screen. Before this, the agent was guessing at whether its change actually worked. Now it just checks. This means that, with some practice, you can start setting up your agent and app to validate your UI changes and iterate on them as needed.
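An agent-side check could use those files roughly like this. It finds the most recent snapshot in the session's output directory and checks whether an expected label appears in it. Because snapshots land every 500 milliseconds, it retries for a few seconds. The directory, the `*.json` file pattern, and the plain substring check are all assumptions on my part. I'm not describing FlowDeck's actual file layout or format.

```python
import time
from pathlib import Path
from typing import Optional


def latest_snapshot(session_dir: Path, pattern: str) -> Optional[Path]:
    """Return the most recently modified file matching `pattern`, or None."""
    candidates = [p for p in session_dir.glob(pattern) if p.is_file()]
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.stat().st_mtime)


def wait_for_label(
    session_dir: Path,
    label: str,
    pattern: str = "*.json",  # HYPOTHETICAL file pattern for accessibility trees
    timeout: float = 5.0,
    interval: float = 0.5,    # matches the 500 ms snapshot cadence
) -> bool:
    """Poll the newest snapshot until `label` appears or the timeout expires."""
    deadline = time.monotonic() + timeout
    while True:
        snapshot = latest_snapshot(session_dir, pattern)
        if snapshot is not None and label in snapshot.read_text(errors="replace"):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

A substring check only confirms that something with that label is on screen. As the next paragraph points out, it says nothing about whether the screen looks right.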

That said, I haven't figured out how to use this to make sure things look good. It's a great functionality test, but agents just don't know how a good UX feels, or when a design looks right. These are skills that are, in my opinion, still very human, so I'm not expecting an agent to get things 100% right on the first try. I often tweak UI and UX by hand after the agent has made things functional.

There's a similar set of commands for macOS apps under flowdeck ui mac, but I mostly use the iOS side.

None of this is magic, by the way. It's all built on existing commands that would be a pain to chain together by hand. FlowDeck just makes it easy.

Setting up FlowDeck as your agent's go-to tool

Once FlowDeck is installed and you've activated your license or trial, you can install the agent skill that comes with FlowDeck. I use Cursor, so I add the skill to my project with the following command:

```bash
flowdeck ai install-skill --agent cursor --mode project
```

This drops a skill file into .cursor/skills/flowdeck/ that teaches my agent the FlowDeck commands and the order to run them in. On its own, this is already enough to make Cursor prefer FlowDeck for anything Xcode-related.

But I went one step further, and this is where it connects back to my delivery pipeline post. In that post I talked about agents.md being a living document that you update whenever your agent does something you don't like. This is exactly the kind of thing that belongs in it.

I added a short rule to my agents.md that looks roughly like this:

```markdown
## Apple platform tooling

- Use FlowDeck for all build, run, test, simulator, device, and log tasks
- Do not call `xcodebuild`, `xcrun simctl`, or `xcrun devicectl` directly
- Start with `flowdeck config get --json` to check the saved project config
- Use `flowdeck run --log` when you need to see runtime output
```

These four lines, combined with the skill, make my agent reach for FlowDeck automatically whenever I ask it to build, test, or run something. In fact, building and testing happen before the agent is even allowed to ask me to review code; I don't want to review code only to realize later that it doesn't build or that tests fail. I never say "use FlowDeck" in my prompts; the skill and my agents file make sure agents use FlowDeck instead of xcodebuild.
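That build-then-test gate relies on the agent following instructions. If you want it enforced, a small script can do that. This sketch only uses the flowdeck build and flowdeck test commands from earlier in this post. It treats a non-zero exit code as failure, which is my assumption about how FlowDeck reports it. The step list and function names are hypothetical.

```python
import subprocess
import sys
from typing import Callable, Sequence

# HYPOTHETICAL gate: run these in order and stop at the first failure.
GATE_STEPS: list[list[str]] = [
    ["flowdeck", "build"],
    ["flowdeck", "test"],
]


def run_step(command: Sequence[str]) -> int:
    """Run one command with output going to the terminal; return its exit code."""
    return subprocess.run(list(command)).returncode


def review_gate(runner: Callable[[Sequence[str]], int] = run_step) -> bool:
    """Return True only if every step exits with status 0.

    ASSUMPTION: a non-zero exit code means the build or tests failed.
    """
    for command in GATE_STEPS:
        if runner(command) != 0:
            print(f"gate failed at: {' '.join(command)}", file=sys.stderr)
            return False
    return True


if __name__ == "__main__":
    sys.exit(0 if review_gate() else 1)
```

The `runner` parameter exists so the gate can be exercised without FlowDeck installed. Stopping at the first failure also means the agent never wades through test output for a build that didn't compile.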

That's what I mean when I say I stopped thinking about it. Setting it up took maybe 10 minutes, and I've barely thought about it since because it's just the default option now.

As needed, you can set up more specific configurations like telling FlowDeck to run in a special CI mode, or pointing it at a specific Derived Data path (which can be useful in parallel multi-agent workflows).
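In parallel workflows, a separate Derived Data path keeps two agents building the same project at once from sharing, and fighting over, one build directory. Here's a minimal sketch, assuming each agent works in its own git worktree: derive a stable, per-worktree directory from the worktree's path. I'm not reproducing FlowDeck's option for setting that path here, so check its documentation. For reference, raw xcodebuild takes it via -derivedDataPath. The base directory in the example is hypothetical.

```python
import hashlib
from pathlib import Path


def derived_data_path(worktree: Path, base: Path) -> Path:
    """Return a stable Derived Data directory unique to this worktree.

    The same worktree always maps to the same directory (so incremental
    builds keep working), and different worktrees get different directories
    (barring a practically impossible hash collision).
    """
    resolved = worktree.resolve()
    digest = hashlib.sha256(str(resolved).encode("utf-8")).hexdigest()[:12]
    # Keep the folder name readable: worktree name plus a short hash.
    return base / f"{resolved.name}-{digest}"


if __name__ == "__main__":
    # HYPOTHETICAL base directory for agent builds.
    print(derived_data_path(Path.cwd(), Path.home() / "AgentDerivedData"))
```

Hashing the full path rather than using the folder name alone matters when two worktrees share a name in different parent directories.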

How it compares to XcodeBuildMCP

While reading this, you might be thinking, "This sounds very similar to XcodeBuildMCP." XcodeBuildMCP is currently owned and maintained by Sentry (which acquired it), it's open source, and it covers a lot of ground: simulator, device, macOS, SwiftPM, UI automation, and even LLDB-based debugging. If you want a free, MCP-native option, this is the one I'd pick.

Where it differs from FlowDeck isn't really the feature list; in many cases they solve the same problems. The difference is that XcodeBuildMCP was mainly an MCP server when I first looked into it. That meant another process running alongside your editor, tool schemas that your agent has to negotiate with, and response size limits to work around. MCPs and skill files each have pros and cons that I won't go into in this post. If MCP is a paradigm you like, XcodeBuildMCP might be a better choice for you. If you prefer a CLI with skill files, FlowDeck makes more sense.

That said, XcodeBuildMCP now recommends its CLI mode, which makes it a lot more similar to FlowDeck than it originally was. If you're trying to decide between the two, I recommend giving both a try and seeing which one makes the features you need easiest to use. My experience with XcodeBuildMCP is very limited, mainly because FlowDeck fell into my lap and I had no reason to try something else.

I've found one interesting benefit to FlowDeck being a CLI, though: I can run the same commands my agent runs. I don't need to do this often, but having a CLI I can interact with means I can test and try workflows without involving an agent, which is quite useful, especially when I'm exploring features.

Both tools work. Pick what fits your setup. FlowDeck just fits mine better.

Summary

In this post, you learned about FlowDeck and how it's changed the way I build and run my apps outside of Xcode, without needing to have Xcode open at all. I explained that FlowDeck helps me save valuable context window space by returning parsed, structured responses to my agent's commands, which makes processing build output more efficient and less token-heavy.

You also learned that FlowDeck can directly interact with the simulator through the accessibility tree that the simulator exposes. This is incredibly useful as a way to let AI agents test their code, and to validate that things are functional. It's not a tool that magically gives your agent eyes and a sense of taste, but that doesn't make it any less useful.

If you've been building out an agentic iOS workflow, FlowDeck is a tool I highly recommend checking out. If nothing else, it helps your agents process build output while saving valuable tokens, and it doesn't break or need tons of tinkering to set up.